IBM Storwize and FlashSystem Storage Upgrades

07/26/2022

Introduction

In an enterprise setting, upgrading storage infrastructure is quite different from running updates on your home PC, or at least it should be. While updates expand functionality, simplify interfaces, fix bugs and close vulnerabilities, they can also introduce new bugs and vulnerabilities. Some new bugs are triggered only by specific conditions, and if those conditions exist in your environment you will encounter the issue. In an enterprise environment where many users, and sometimes customers, rely upon the storage infrastructure, the impact of an issue caused by an upgrade can be broad, can affect business credibility and may even carry legal ramifications. Therefore, having a process to mitigate as many risks as possible is a necessity. The process presented here is a general framework with specific steps for IBM midrange Storwize V5000 and V7000 and FlashSystem storage.

High-level Overview

The process described here at a high level is a good general framework for any shared infrastructure upgrade in an enterprise environment.

- Planning

- Document current environment cross section from CMDB and/or direct system inquiry (Server Hardware Model, OS and Adapter Model/Firmware/Driver, SAN Switch Model/Firmware and current Storage Model/Code Level).

- Ensure the SAN infrastructure is under vendor support so that code may be downloaded and support may be engaged if any problems are encountered.

- Download and review the release notes for the three most recent code releases.

- Use vendor interoperability documents or web applications to validate supportability in your environment using the information gathered above.

- Choose the target code level. (N-1 is often preferred over N, the bleeding-edge latest release, unless N-1 has significant vulnerabilities or is incompatible with your environment.)

- Preparation

- Download the target release installation code and any upgrade test utilities provided by the vendor.

- Upload the target code and test utility and run test utility.

- Run initial health checks on the storage systems.

- Gather connectivity information from SAN and Storage devices and verify connection and path redundancy.

- Initiate a resolution plan before scheduling the upgrade for any identified issues.

- Submit change control and obtain approval for upgrade.

- Upgrade

- Rerun the upgrade test utility to verify issues are still resolved.

- Run a configuration backup and a diagnostic snapshot, list the logs to a file, and download each to a central configuration repository.

- Perform health checks

- Clear logs and clean diagnostic snapshots

- Initiate any prerequisite component microcode upgrades (drive firmware, etc.) and validate completion.

- Initiate system update and monitor upgrade process

- Upon completion validate upgrade, perform health checks and validate the dependent systems connectivity.

Upgrade Planning

The first step is to identify all Storwize and FlashSystem storage arrays by querying the CMDB or inventory lists, and to document them along with their current code levels.

| Array Name | IP Address | Location | SN | Model-Type | CodeLevel |

Next, query these systems for lists of the hosts attached to them and import the lists into a spreadsheet. Then query the CMDB for a list of the systems in the environment along with their OS and hardware model information and pull this into the same spreadsheet. Cross-reference the lists and create a report by OS and hardware. From the SAN inventory, also determine which SAN switches the storage is attached through. The following code may be pasted into a FlashSystem console to produce a list of attached hosts.

## Header row; the columns match the 14 fields printed for each path below

echo "Host|PortCnt|Type|IoGCnt|Status|Logins|State|HostWWpn|HostNport|Node|NodeWWpn|NodePort|NodeNport|NodePortState"

lshost -nohdr | while read -a Hosts ; do

lshost ${Hosts[1]} | while read -a HstDtl ; do

if [[ ${HstDtl[0]} =~ ^name ]] ; then ThsHst=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^port_count ]] ; then ThsPrt=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^type ]] ; then ThsTyp=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^iogrp_count ]] ; then ThsIct=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^status ]] ; then ThsSts=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^WWPN ]] ; then ThsWpn=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^node_logged_in_count ]] ; then ThsLgn=${HstDtl[1]} ; fi

if [[ ${HstDtl[0]} =~ ^state ]] ; then ThsSta=${HstDtl[1]}

if [[ ${ThsSta} == offline ]] ; then

printf "${ThsHst}|${ThsPrt}|${ThsTyp}|${ThsIct}|${ThsSts}|${ThsLgn}|${ThsSta}|${ThsWpn}|NoPort|NoNode|NoWWpn|-1||offline\n"

else

lsfabric840 -nohdr | grep -e"${ThsWpn}" | while read -a FB ; do

printf "${ThsHst}|${ThsPrt}|${ThsTyp}|${ThsIct}|${ThsSts}|${ThsLgn}|${ThsSta}|${ThsWpn}|${FB[1]}|${FB[3]}|${FB[4]}|${FB[5]}|${FB[6]}|${FB[7]}\n"

done

fi

fi

done

done
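The cross-referencing step described above can also be done on an admin workstation instead of a spreadsheet. The following is a minimal sketch, assuming the host list was saved to a pipe-delimited file with the host name in the first field and that the CMDB export is a pipe-delimited file laid out as Host|OS|Model. Both file layouts and the function name xref_hosts are hypothetical and would need to be adapted to your own exports.

```shell
# Sketch: join storage-attached hosts against a CMDB export by host name.
# Assumed (hypothetical) inputs, both pipe-delimited with no header row:
#   $1  attached hosts, host name in field 1 (one line per path is fine)
#   $2  CMDB export laid out as Host|OS|Model
xref_hosts() {
    tmp_hosts=$(mktemp) || return 1
    tmp_cmdb=$(mktemp) || { rm -f "$tmp_hosts"; return 1; }
    # join requires both inputs sorted on the join field in the same collation
    cut -d'|' -f1 "$1" | LC_ALL=C sort -u > "$tmp_hosts"
    LC_ALL=C sort -t'|' -k1,1 "$2" > "$tmp_cmdb"
    # -a1 keeps attached hosts that are missing from the CMDB,
    # -e fills their OS and Model fields with UNKNOWN
    LC_ALL=C join -t'|' -a1 -e UNKNOWN -o 0,2.2,2.3 "$tmp_hosts" "$tmp_cmdb"
    rm -f "$tmp_hosts" "$tmp_cmdb"
}
# Example call (file names are placeholders):
#   xref_hosts attached_hosts.txt cmdb_export.txt
```

Hosts reported with UNKNOWN are attached to the array but absent from the CMDB export, which is usually worth resolving before interoperability is checked.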

HBAs

| Model | Vend:Dev | Description |
|---|---|---|
| FC 5273 | 10DF:F100 | Emulex LPe12002 PCIe LP 8 Gb 2-Port Fibre Channel Adapter |
| FC 5735 | 10DF:F100 | Emulex LPe12002 PCIe 8 Gb PCI Express Dual-port Fibre Channel Adapter |

- Servers, Host OS, and SAN

| Component | Type | Vendor | Model-Type | Min/Crnt Code | HBA-Driver |
|---|---|---|---|---|---|
| Power VC | App | IBM | 1.2.2.3 | | |
| HMC | Appliance | IBM | 7042-CR7 | 8.2.0 | |
| Power 750 | Server | IBM | 8408-E8D | VIOS 2.2.3.52 VIOS 2.2.4.22 | 10DF:F100-202307 10DF:F100-203305 |
| Power 750e | Server | IBM | 8233-E8B | No VIOS | |
| Power S824 | Server | IBM | 8286-42A | | |
| RedHat | OS | RedHat | ES 6 | 6.6 | |
| MDS9148 | Switch | Cisco | 9148 | 8.4(2c) | |

From IBM Fix Central, look up the latest code level and download the release notes for the three most recent code levels. Review the defects fixed by each release and the upgrade paths required. If you are coming from an older version of code, you may need to apply intermediate code levels before the final target code level; note these in your table of storage infrastructure to upgrade.

https://www.ibm.com/support/fixcentral

Review security bulletins to see if there are any issues or vulnerabilities that would necessitate a particular target code level.

https://www.ibm.com/support/pages/ibmsearch?tc=STKMQB&dc=DD200+D600+DB600

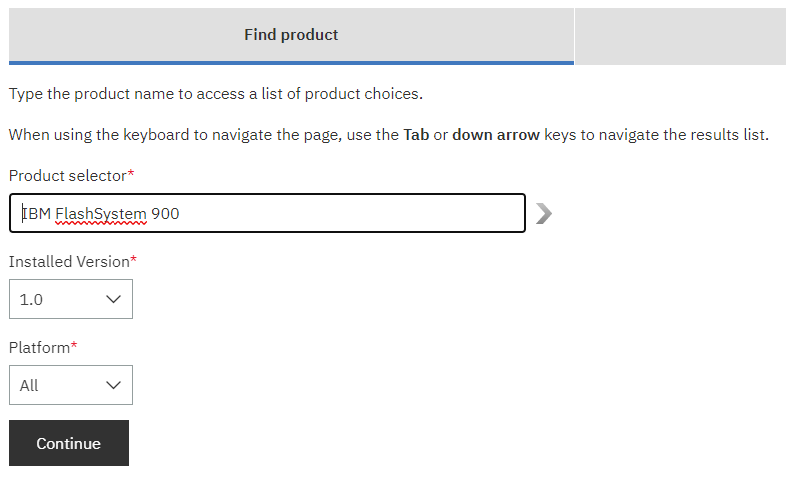

Use the IBM Storage Interoperability web application: enter the FlashSystem along with the SAN switch and the platforms discovered in the previous steps. Review the returned results for any known issues that may impact the target environment, then download them in spreadsheet format to keep as an artifact. Based upon the interoperability results, upgrade paths and defects addressed, choose the target code level. A good rule of thumb is to choose the N-1 code level.

https://www-03.ibm.com/systems/support/storage/ssic/interoperability.wss
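The N-1 rule of thumb can be applied mechanically once the candidate code levels have been listed. The sketch below assumes the levels are supplied one per line in dotted numeric form and that your sort supports version ordering with -V (GNU coreutils does). The function name pick_n_minus_1 is hypothetical, and the sketch treats N-1 as simply the second-newest entry in the list you give it; deciding which releases belong in that list remains a judgment call based on the release notes and interoperability results.

```shell
# Sketch: print the second-newest code level from a list read on stdin.
# Assumes one dotted-numeric level per line and a sort that supports -V.
# When only one level is supplied, that level is printed.
pick_n_minus_1() {
    sort -V -u | tail -n 2 | head -n 1
}
# Example call:
#   printf '1.6.1.4\n1.6.1.10\n1.5.2.9\n' | pick_n_minus_1
```

Version ordering matters here: a plain text sort would place 1.6.1.10 before 1.6.1.4 and select the wrong level.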

Upgrade Preparation

Download the target release code as well as any test utilities and store them in a central repository used by the Storage Infrastructure team. Review the upgrade procedures and release notes for the target code level and note any unique requirements or modifications to the general procedure. Review the host connectivity guidelines in the implementation documentation and note any requirements that may affect how current hardware is configured, such as minimum HBA code level or SCSI queue depth settings.

https://www.ibm.com/docs/en/flashsystem-900

Non-production, lab and backup storage arrays should be targeted for upgrade first. Schedule the upgrades for periods of low I/O activity. Add a target upgrade date to the table of storage arrays created previously. Upload the code and test utility to each Storwize or FlashSystem array and install the test utility. Execute the test utility to check whether there are any issues that need to be resolved before upgrading. These may include back-level drive firmware, which requires downloading and applying a drive firmware update, or significant hardware failures that need to be addressed before updating.

## Upload test utility and upgrade code

scp TMS9840_INSTALL_svcupgradetest_1.54_74 {user}@{FlashIP}:/home/admin/upgrade

scp IBM9840_INSTALL_1.6.1.4 {user}@{FlashIP}:/home/admin/upgrade

## Upgrade Test

lsdumps -prefix /home/admin/upgrade

applysoftware -file TMS9840_INSTALL_svcupgradetest_1.54_74

svcupgradetest -v 1.6.1.4

## Error Checking

finderr

lseventlog -order severity -alert yes -message no -monitoring no -fixed no

svcinfo lssoftwareupgradestatus

## Check for any component failures

lsdrive -filtervalue status=degraded ; lsdrive -filtervalue status=offline ; lsenclosure -filtervalue status=degraded ; lsenclosure -filtervalue status=offline ; lsenclosurepsu -filtervalue status=degraded ; lsenclosurepsu -filtervalue status=offline ; lsenclosurebattery -filtervalue status=degraded ; lsenclosurebattery -filtervalue status=offline ; lsnodecanister -filtervalue status=offline ; lsnodecanister -filtervalue status=degraded ; lsarray -filtervalue raid_status=offline ; lsarray -filtervalue raid_status=syncing ; lsportfc -filtervalue status=inactive_configured
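When many filtered checks are chained on one line, it can be hard to tell which command produced which output. The following is a minimal sketch of a wrapper, intended to be run from an admin workstation over ssh rather than on the array itself. It assumes that each filtered command prints nothing when no component matches the filter, which should be confirmed on your code level. The function name report_check is hypothetical.

```shell
# Sketch: run one health-check command and label the result.
# Assumption: a filtered list command prints nothing when nothing matches.
# Returns 0 when the command printed nothing, 1 otherwise.
report_check() {
    out=$("$@" 2>&1)
    if [ -z "$out" ]; then
        printf 'OK    %s\n' "$*"
    else
        printf 'CHECK %s\n%s\n' "$*" "$out"
        return 1
    fi
}
# Example call, using the same placeholders as the scp commands above:
#   report_check ssh {user}@{FlashIP} lsdrive -filtervalue status=offline
```

Because the function returns non-zero whenever a check produces output, it can also gate a scripted pre-check that should stop at the first finding.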

Verify Host Connectivity

- Check each host's connection to the fabric to ensure that its ports are healthy and not in a degraded state. Each host connection should also be in its own zone with the Storwize or FlashSystem targets and not in an ALL_IN_ONE zone. If the initiators share a zone when a node is brought down for upgrade, they may affect one another with broadcasts as they go through recovery operations.

- On each host in the list, ensure that multipathing is up and running and verify that the settings match the recommendations in the host attachment guide. On Linux hosts this typically means adding the no_path_retry, rr_min_io_rq and dev_loss_tmo parameters to the multipath.conf file, creating the udev rules file 99-ibm-2145.rules, and setting the SCSI timeout for the running OS using echo 120 > /sys/block/{sd disk}/device/timeout.

- The following scripts may be pasted into a Storwize or FlashSystem console to list host connection layout and status.

Storwize Connectivity Script

svcinfo lshost -nohdr|while read -a host

do

printf "\n%-20s\n" ${host[1]}

svcinfo lshost ${host[1]} |while read -a wwpn

do

if [[ ${wwpn[0]} == WWPN ]]

then

printf "${host[1]} : ${wwpn[1]} "

for node in `svcinfo lsnode -nohdr |while read -a node ; do echo $node; done`

do

for port in 1 2 3 4

do

printf "-"

svcinfo lsfabric -nohdr -wwpn ${wwpn[1]} |while read -a fabric

do

[[ ${fabric[2]} == $node && ${fabric[5]} == $port ]] && printf "\b\033[01;3%dm$port\033[0m" $((${#fabric[7]}/2-1))

done

done

printf " "

done

printf "\n"

fi

done

done

FlashSystem Connectivity Script

svcinfo lshost -nohdr|while read -a host

do

printf "\n%-20s\n" ${host[1]}

svcinfo lshost ${host[1]} | grep WWPN |while read -a wwpn

do

WWPNSTATE=$(lshost -delim : ${host[1]} | grep -Ee'WWPN|state' | grep -A 1 ${wwpn[1]} | grep state | cut -d : -f 2)

printf "${host[1]} : ${wwpn[1]} "

for node in `svcinfo lsnode -nohdr |while read -a node ; do echo $node; done`

do

for adpt in 1 2

do

for port in 1 2 3 4

do

printf "-"

svcinfo lsfabric840 -nohdr | grep ${wwpn[1]} |while read -a fabric

do

[[ ${fabric[2]} == $node && ${fabric[3]} == $adpt && ${fabric[4]} == $port ]] && printf "\b\033[01;3%dm$port\033[0m" $((${#WWPNSTATE}/2-1))

done

done

printf " "

done

printf " "

done

printf "\n"

done

done

NODE 1 NODE 2

A1 A2 A1 A2

host11

host11 : 100050106DE85F17 ---- -2-- ---- -2--

host11 : 100050106DE85F16 ---- 1--- ---- 1---

host11 : 100050106DE85F47 -2-- ---- -2-- ----

host11 : 100050106DE85F46 1--- ---- 1--- ----

host12

host12 : 100050106DE85F3F ---- -2-- ---- -2--

host12 : 100050106DE85F3E ---- 1--- ---- 1---

host12 : 100050106DE88FF7 -2-- ---- -2-- ----

host12 : 100050106DE88FF6 1--- ---- 1--- ----

Resolve any issues with host connectivity before scheduling the storage for upgrade.
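The connectivity scripts above produce a visual layout; with many hosts it can help to flag under-connected hosts automatically. The sketch below assumes the active paths have been exported, one per line, as Host|Node|Port. That layout is hypothetical and would have to be derived from your own lsfabric or lsfabric840 output. The function name check_path_redundancy and the default threshold of two paths per host per node are also assumptions.

```shell
# Sketch: flag host/node pairs with fewer than the required number of paths.
# stdin: one active path per line as Host|Node|Port (hypothetical layout)
# $1:    minimum paths required per host per node (default 2)
# Prints Host|Node|PathCount for each shortfall; returns 1 if any are found.
check_path_redundancy() {
    awk -F'|' -v min="${1:-2}" '
        NF >= 2 { paths[$1 "|" $2]++; hosts[$1]; nodes[$2] }
        END {
            bad = 0
            for (h in hosts) for (n in nodes) {
                c = paths[h "|" n] + 0
                if (c < min) { printf "%s|%s|%d\n", h, n, c; bad = 1 }
            }
            exit bad
        }'
}
# Example call (file name is a placeholder):
#   check_path_redundancy 2 < active_paths.txt
```

Every host is checked against every node seen anywhere in the input, so a host that has lost all paths to one node is reported with a count of 0 rather than being silently skipped.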

Upgrade Implementation

1. Verify Status of Drives, Enclosures and host connections to the Array

At the time of upgrade, re-verify that all components are functioning as expected; do not continue if there are any new or pre-existing component failures. Issue the commands for drives and enclosures, filtering on a status of offline or degraded. Check whether any Fibre Channel ports are down with lsportfc. Check whether any array's RAID protection is still syncing due to a previous drive failure with lsarray. Then use finderr to see if there are any unresolved errors on the array; if any are listed, check the event log using the lseventlog command below. Finally, check that no software upgrade is currently running.

lsdrive -filtervalue status=degraded ; lsdrive -filtervalue status=offline ; lsenclosure -filtervalue status=degraded ; lsenclosure -filtervalue status=offline ; lsenclosurepsu -filtervalue status=degraded ; lsenclosurepsu -filtervalue status=offline ; lsenclosurebattery -filtervalue status=degraded ; lsenclosurebattery -filtervalue status=offline ; lsnodecanister -filtervalue status=offline ; lsnodecanister -filtervalue status=degraded ; lsarray -filtervalue raid_status=offline ; lsarray -filtervalue raid_status=syncing ; lsportfc -filtervalue status=inactive_configured

finderr

lseventlog -order severity -alert yes -message no -monitoring no -fixed no

svcinfo lssoftwareupgradestatus

2. Re-verify connectivity at the time of the upgrade to ensure that no paths have been lost. Use the Storwize or FlashSystem script listed above to visually verify that each host has redundant connections to each node.

3. Back up the configuration and take a snapshot

## Clean up old config backups and diagnostic snaps

svcconfig clear -all

svc_snap clean

## Take new backup and diagnostic snap

svcconfig backup

svc_snap -c

lsdumps -prefix /dumps

## Use scp to copy the files down to storage repository

scp {user}@${FLSHIP}:/dumps/svc.config.backup.* /{repo}/flsh/bkup/${FLSHNAME}

scp {user}@${FLSHIP}:/dumps/snap.*.tgz /{repo}/flsh/bkup/${FLSHNAME}

4. If a drive upgrade is required, see Appendix A for the steps to perform a drive upgrade.

5. If not already copied, copy the upgrade files to the array and verify that the files were successfully uploaded by checking that they are present on the nodes.

lsdumps -prefix /home/admin/upgrade

6. If not already installed, install the upgrade test utility. Issue the upgrade test and review the output to check whether there have been any problems found by the utility. The output from the command will either state that there have been no problems found, or will direct you to details about any known issues which have been discovered on the system. If it has been determined that the current back-level of drive firmware is still supported the upgrade may proceed.

applysoftware -file TMS9840_INSTALL_svcupgradetest_1.54_74

svcupgradetest -v 1.6.1.4

7. Prior to issuing the upgrade, run these health checks to capture a before picture of the array. If any hosts are offline, you need to know this beforehand so you can compare it to the health checks run during the upgrade. Check whether any Fibre Channel ports are down with lsportfc. Check whether any array's RAID protection is still syncing due to a previous drive failure.

## copy and store the output of these commands in a notebook as they will be lost when the node goes down for upgrade.

lsdrive -filtervalue status=offline ; lsmdisk -filtervalue status=offline ; lsvdisk -filtervalue status=offline ; lshost -filtervalue status=offline ; lsarray -filtervalue raid_status=offline ; lsarray -filtervalue raid_status=syncing ; lsportfc -filtervalue status=inactive_configured ; lsenclosure -filtervalue status=offline ; lsenclosurebattery -filtervalue status=offline ; lsenclosurebattery -filtervalue status=degraded ; lsenclosurepsu -filtervalue status=offline ; lsenclosurepsu -filtervalue status=degraded

8. Record the current node status and code levels, then issue applysoftware to start the upgrade process:

lsnodecanister

lsnodecanister -nohdr | while read -a ND ; do lsnodecanistervpd ${ND[0]} ; done | grep code_level ; lssystem | grep code_level

applysoftware -file IBM9840_INSTALL_1.6.1.4

svcinfo lssoftwareupgradestatus

9. Issue the following CLI commands to check the status of the code upgrade process:

lsnodecanister

lsnodecanister -nohdr | while read -a ND ; do lsnodecanistervpd ${ND[0]} ; done | grep code_level ; lssystem | grep code_level

svcinfo lssoftwareupgradestatus

## Periodically check to see if anything went offline

(lsdrive -filtervalue status=offline ; lsmdisk -filtervalue status=offline ; lsvdisk -filtervalue status=offline ; lshost -filtervalue status=offline ; lsarray -filtervalue raid_status=offline ; lsarray -filtervalue raid_status=syncing ; lsportfc -filtervalue status=inactive_configured )

finderr

lseventlog -order severity -alert yes -message no -monitoring no -fixed no

10. To verify that the upgrade completed successfully, issue the lsnodecanistervpd CLI command for each node in the system. The code_level field should display the new code level and all nodes should be back online.

svcinfo lssoftwareupgradestatus ; lsupdate

lsnodecanister

lsnodecanistervpd 1

lsnodecanistervpd # … ## do this for each node listed by lsnodecanister

## or use this script to list nodes, status and code level

lsnodecanister -nohdr | while read -a ND ; do lsnodecanistervpd ${ND[0]} | while read -a NV ; do if [[ ${NV[*]} =~ code_level ]] ; then printf "${ND[1]} ${ND[3]} ${NV[*]}\n" ; fi ; done ; done

Check whether anything has gone offline by comparing the output stored in your notebook to the output after the upgrade has completed.

## Store the state of hardware after the upgrade starts in the AFTER array periodically and compare to the before array

readarray -t AFTER< <(lsdrive -filtervalue status=offline ; lsmdisk -filtervalue status=offline ; lsvdisk -filtervalue status=offline ; lshost -filtervalue status=offline ; lsarray -filtervalue raid_status=offline ; lsarray -filtervalue raid_status=syncing ; lsportfc -filtervalue status=inactive_configured ; lsenclosure -filtervalue status=offline ; lsenclosurebattery -filtervalue status=offline ; lsenclosurebattery -filtervalue status=degraded ; lsenclosurepsu -filtervalue status=offline ; lsenclosurepsu -filtervalue status=degraded)

## after connection with the node is lost copy the original output of the command and paste into BEFORE array for comparison

readarray -t BEFORE < <(echo "paste here")

## Compare the before states to the after states

MAX=$(( ${#BEFORE[@]} > ${#AFTER[@]} ? ${#BEFORE[@]} : ${#AFTER[@]} )) ; for ((i=0; i < MAX; i++)) ; do printf "%-30s\t|\t%-30s\n" "${BEFORE[$i]:--}" "${AFTER[$i]:--}" ; done
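A side-by-side listing compares lines by position, so a single new entry shifts everything after it. If the before and after outputs are saved to files on an admin workstation, a set difference shows only what is new. The following is a minimal sketch under that assumption; the function name new_faults and the idea of saving the outputs to files are illustrative additions, not part of the procedure above.

```shell
# Sketch: print lines that appear only in the "after" health-check output.
# $1 = file holding the output saved before the upgrade
# $2 = file holding the same checks captured during or after the upgrade
new_faults() {
    before_sorted=$(mktemp) || return 1
    after_sorted=$(mktemp) || { rm -f "$before_sorted"; return 1; }
    # comm requires sorted input; -13 suppresses lines unique to the
    # first file and lines common to both, leaving only new lines
    LC_ALL=C sort -u "$1" > "$before_sorted"
    LC_ALL=C sort -u "$2" > "$after_sorted"
    LC_ALL=C comm -13 "$before_sorted" "$after_sorted"
    rm -f "$before_sorted" "$after_sorted"
}
# Example call (file names are placeholders):
#   new_faults before.txt after.txt
```

Header rows and faults that were already present before the upgrade appear in both files and therefore drop out of the result.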

Rerun the connectivity scripts to verify that connections are the same as before.

Appendix A – Perform Drive Upgrade (If Necessary)

1. Copy the drive firmware file to the upgrade directory on the array and confirm that it is present.

scp IBM2076_DRIVE_{date} superuser@cluster_ip:/home/admin/upgrade

lsdumps -prefix /home/admin/upgrade

2. Check to see which vdisks are dependent upon the drive. A vdisk may become dependent upon a single drive if RAID has failed in an enclosure.

lsdependentvdisks -drive {drive id}

lsdrive -nohdr | while read -a DRVS ; do lsdependentvdisks -drive ${DRVS[0]} ; done

3. Apply the drive firmware.

applydrivesoftware -file IBM2076_DRIVE_{date} -type firmware -drive {drive id}

Ex: applydrivesoftware -file IBM_StorwizeV7000_SAS_DRIVE_190426 -type firmware -drive 92

Ex: applydrivesoftware -file IBM_StorwizeV7000_SAS_DRIVE_190426 -type firmware -all

4. If the applydrivesoftware command fails with the message "task cannot be initiated because for at least one of the specified drives, the specified file does not contain an image" then you will have to use a script to apply the software to each drive.

for did in {0..120} ; do echo "upgrading drive $did" ; applydrivesoftware -file IBM_StorwizeV7000_SAS_DRIVE_190426 -type firmware -drive $did && sleep 8 ; done

## OR

svcinfo lsdrive -nohdr |while read did name IO_group_id;do echo "Updating drive "$did;svctask applydrivesoftware -file IBM_StorwizeV7000_SAS_DRIVE_190426 -type firmware -drive $did; sleep 5s;done

5. Monitor drive upgrade task completion from the GUI or with lsdriveupgradeprogress.

lsdriveupgradeprogress | grep -Ee' scheduled' -c ; lsdriveupgradeprogress | grep -Ee'completed' -c

COMPLETE=$(lsdriveupgradeprogress | grep -Ee' completed' -c) ; PENDING=$(lsdriveupgradeprogress | grep -Ee' scheduled|progressing' -c) ; TOTAL=$(( COMPLETE + PENDING )) ; if (( TOTAL > 0 )) ; then PERCENT=$(( 200*COMPLETE/TOTAL % 2 + 100*COMPLETE/TOTAL )) ; echo "Drive Upgrade is $PERCENT % Complete" ; else echo "No drive upgrades found" ; fi

6. Confirm that the drive is updated and online and that no vdisks or mdisks are offline.

lsdrive {drive id}

lsdrive -filtervalue status=degraded ; lsdrive -filtervalue status=offline

lsmdisk -filtervalue status=degraded ; lsmdisk -filtervalue status=offline

lsvdisk -filtervalue status=degraded ; lsvdisk -filtervalue status=offline